Everything you always wanted to know about process mining but were afraid to ask.

Companies use information systems to handle business transactions more efficiently. Enterprise resource planning (ERP) systems and workflow management systems (WFMS) are the dominant types of information system in this area; both were developed to support and automate the execution of business processes. Supporting and monitoring business processes such as purchasing, operations, logistics, sales, and human resources is hardly conceivable today without integrated information systems. Integrating information systems does more than raise efficiency and effectiveness. It also opens up new ways to access and analyze the data generated during the automated processing of business transactions. Evaluating this data can, for example, lead to better decisions.

The use of methods and tools to derive information from digital data is summarized under the term “business intelligence” (BI). For some years the term “big data” has also been common in this context. Essentially, it refers to applying BI methods to large volumes of data. The most prominent BI approaches of the past are probably online analytical processing (OLAP) and data mining. OLAP tools make it possible, for example, to analyze multidimensional data with operations such as the following (excerpt from Wikipedia):

- Drill-down: break aggregations of an information object down into detailed values; “zooming in”

- Roll-up: the inverse of drill-down; aggregate to a higher hierarchy level (e.g. from the monthly to the yearly view)

- Slice: cut slices out of the data cube

- Dice: produce a smaller cube that contains a sub-volume of the whole cube by restricting one or more dimensions to a subset of their values

- Split: break a value down along several dimensions to reveal further detail (e.g. the revenue of one branch for a particular set of products)

- Merge: the opposite of split; reduce the granularity again by removing additional dimensions
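To make a few of these operations concrete, here is a minimal sketch in plain Python. The fact table, the branch and product names, and the helper functions `aggregate` and `slice_` are all made up for illustration; real OLAP tools work on far larger cubes and with their own query languages.

```python
from collections import defaultdict

# Hypothetical fact table: (year, month, branch, product, revenue).
SALES = [
    (2016, 11, "Hamburg", "Paper", 100.0),
    (2016, 12, "Hamburg", "Toner", 250.0),
    (2016, 12, "Berlin", "Paper", 80.0),
    (2017, 1, "Hamburg", "Paper", 120.0),
    (2017, 1, "Berlin", "Toner", 300.0),
]
DIMENSIONS = ("year", "month", "branch", "product")


def aggregate(rows, by):
    """Sum the revenue grouped by the named dimensions."""
    positions = [DIMENSIONS.index(name) for name in by]
    totals = defaultdict(float)
    for row in rows:
        key = tuple(row[i] for i in positions)
        totals[key] += row[-1]
    return dict(totals)


def slice_(rows, dimension, value):
    """Slice: keep only the rows where one dimension has a fixed value."""
    position = DIMENSIONS.index(dimension)
    return [row for row in rows if row[position] == value]


monthly = aggregate(SALES, by=("year", "month"))  # detailed (drill-down) view
yearly = aggregate(SALES, by=("year",))           # roll-up from months to years
hamburg = slice_(SALES, "branch", "Hamburg")      # slice for a single branch
```

Going from `monthly` to `yearly` is a roll-up, the reverse direction a drill-down; `slice_` fixes one dimension to a single value.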

Data mining, by contrast, is concerned with finding patterns in large volumes of data. The flood of information is not always a blessing, however, and can quickly become a curse. This phenomenon is known as information overload or data explosion. With large data volumes (big data), its scale quickly becomes apparent, and given the limited human capacity to absorb information, we soon reach our limits. The question then is how growing data volumes can be handled so that the information they contain is made accessible to people without overwhelming them.

This is exactly where data mining comes in. It describes the analysis of data to find relationships and patterns, where the patterns are an abstraction of the analyzed data. The abstraction reduces complexity and makes the information accessible to people.

Process mining picks up at exactly this point: its goal is to extract and present information from business processes. It comprises procedures, tools, and methods for discovering, monitoring, and improving real processes by extracting knowledge from so-called event logs. Data generated during the execution of business processes can thus be used to reconstruct process models. The resulting process models can in turn be used to analyze and optimize the processes. The discipline therefore sits at the intersection of pure data mining and business process management.

Process Models and Event Logs

As mentioned, the goal of process mining is to construct process models from existing data records (also called event logs). In the scientific sense, a model is an immaterial representation of a section of the real world for a specific purpose. Models reduce complexity by showing the features of interest and omitting the others. A process model, in turn, is a graphical representation of a business process that describes the dependencies between the activities needed to execute it. It consists of a set of activities and the conditions between them.

Various notations exist for representing process models; we do not go into them individually here. Each notation has its advantages and disadvantages, some of which are the subject of controversy. In this article we use BPMN (Business Process Model and Notation), which is maintained and developed by the Object Management Group (OMG).

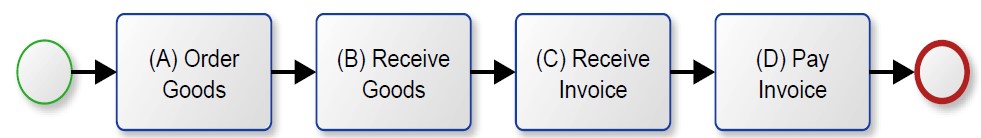

Figure 1 shows a typical, simplified purchasing process. It begins with an order for physical goods. After some time the goods arrive at the company and a goods receipt is posted. A few days later the supplier issues an invoice. The invoice is then paid, and the process ends in this scenario.

The process in Figure 1 was modeled by hand and represents an ideal purchasing process. At this point, however, it is not known whether it also reflects reality. In practice it may well happen that an invoice is paid before it has even been received. The question therefore is: how do we obtain reliable statements about how business processes are actually executed?

An information system stores data in log files or databases while it processes transactions. For a purchase order, for example, it records which kind of goods is ordered, the quantity, the supplier, the time of the order, and much more. This stored data can be extracted from the information systems as event logs, which form the basis for process mining algorithms. More on that in the next blog post.

| Case ID | Event ID | Timestamp | Activity |
|---|---|---|---|
| 1 | 1000 | 01.01.2017 | Order Goods |
| 1 | 1001 | 10.01.2017 | Receive Goods |
| 1 | 1002 | 13.01.2017 | Receive Invoice |
| 1 | 1003 | 20.01.2017 | Pay Invoice |
| 2 | 1004 | 02.01.2017 | Order Goods |
| 2 | 1005 | 01.01.2017 | Receive Goods |
| … | … | … | … |

As you can see, an event log is nothing more than a table. It consists of all events that arise from business activities. Every event belongs to a case, and the process context results from the assignment of events to their case. The timestamp allows the events to be put in chronological order. The sequence of events within a case is also called a path. From it, a model can be built that represents a single case and is therefore called a process instance. A process model, in turn, abstracts from individual process instances and represents the behavior of all process instances within a process.
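The grouping and ordering described here can be sketched in a few lines of Python. The rows are those of Table 1, assuming that the rows without an explicit case ID belong to the case listed above them; the function name `build_paths` is our own choice for this illustration.

```python
from collections import defaultdict
from datetime import datetime

# Rows of Table 1 as (case_id, event_id, timestamp, activity).
# Assumption: rows without a case ID belong to the case listed above them.
EVENT_LOG = [
    (1, 1000, "01.01.2017", "Order Goods"),
    (1, 1001, "10.01.2017", "Receive Goods"),
    (1, 1002, "13.01.2017", "Receive Invoice"),
    (1, 1003, "20.01.2017", "Pay Invoice"),
    (2, 1004, "02.01.2017", "Order Goods"),
    (2, 1005, "01.01.2017", "Receive Goods"),
]


def build_paths(event_log):
    """Group events by case and order each case chronologically."""
    cases = defaultdict(list)
    for case_id, event_id, timestamp, activity in event_log:
        when = datetime.strptime(timestamp, "%d.%m.%Y")
        # The event ID serves as a tie-breaker for identical timestamps.
        cases[case_id].append((when, event_id, activity))
    return {
        case_id: [activity for _, _, activity in sorted(events)]
        for case_id, events in cases.items()
    }


paths = build_paths(EVENT_LOG)
```

With the timestamps as given in the table, case 2 comes out as "Receive Goods" followed by "Order Goods", which is exactly the kind of deviation from the ideal process that only becomes visible once the events are ordered per case.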

Cases and events are characterized by classifiers and attributes. Classifiers are unique and link cases and events unambiguously. Attributes, by contrast, store additional information that is used in analyses. Table 1 shows an example of an event log.

The Mining Procedure

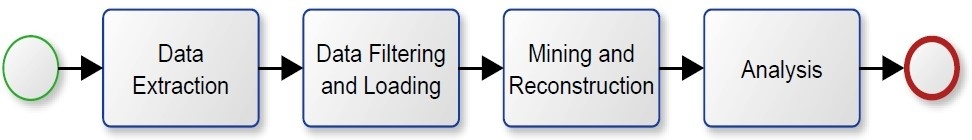

Figure 2 gives an overview of the activities involved in process mining. Before process mining can begin at all, access to the data must be secured. This is already the first major hurdle in process mining projects. Personal data may be extracted, so the works council or those responsible for data protection often have to be involved early on. The relevant data must then be exported from the information system so that the production systems are not impaired. This is the second major hurdle. The export is unfortunately not trivial, because the data needed for process mining is not stored in an ordered, tabular form like the one shown in Table 1. On top of that comes the sheer volume of data. Depending on the goal of the analysis, several million entries have to be exported from the databases, so efficient extraction methods are required.

Then there is the confidentiality issue already mentioned. Depending on legal requirements, the data must be anonymized or pseudonymized. And the preparatory work does not end there. The extracted data must first be cleaned and selected for process mining. These tasks take a great deal of time and have already doomed more than one process mining project. The effort must not be underestimated, because information systems are not free of errors. It is quite possible for data to exist that has no process context at all. Errors and invalid data can have many sources, ranging from faulty programs and deliberate manipulation to hardware faults.
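As an illustration of pseudonymization, the following sketch replaces a user name with a keyed hash. The `user` field, the sample names, and the key are hypothetical, and whether such a scheme satisfies the legal requirements in a given project has to be clarified case by case.

```python
import hashlib
import hmac


def pseudonymize(value: str, secret_key: bytes) -> str:
    """Map a value to a stable pseudonym using a keyed hash (HMAC-SHA256)."""
    digest = hmac.new(secret_key, value.encode("utf-8"), hashlib.sha256)
    return digest.hexdigest()[:16]  # shortened for readability


def pseudonymize_events(events, secret_key, field="user"):
    """Return copies of the events with one field replaced by its pseudonym."""
    return [
        {**event, field: pseudonymize(event[field], secret_key)}
        for event in events
    ]


# Hypothetical events; "user" is an assumed attribute name.
events = [
    {"case": 1, "activity": "Order Goods", "user": "m.mueller"},
    {"case": 1, "activity": "Pay Invoice", "user": "a.schmidt"},
    {"case": 2, "activity": "Order Goods", "user": "m.mueller"},
]
masked = pseudonymize_events(events, secret_key=b"example-key-keep-secret")
```

The same person always receives the same pseudonym, so analyses per user remain possible, while the real name can only be recovered by someone who holds the key and a list of candidate names.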

Once the data has been extracted, a specific process is normally examined within a defined time interval. Records that extend beyond the boundaries of the interval are therefore often “cut off” and are not part of the analysis. They are deleted afterwards or, ideally, never extracted from the system in the first place. If they remain in the event log, they can lead to incorrect process states. Moreover, event logs do not contain data for just one process; they can include events from many different processes. Filtering is therefore particularly important here as well. Care is needed, though: careless filtering can change the object of analysis and introduce further errors, up to and including invalid process instances. A common selection criterion is to keep only activities that are known with certainty to belong to the process. Extraction and filtering are generally supported by software but can also be done manually.
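One possible reading of the interval filter is sketched below: a case is kept only if all of its events lie inside the interval, so that no partial process instances remain. The sample events and the function name are assumptions for this illustration; other cut-off rules are conceivable.

```python
from datetime import date


def drop_cases_crossing_interval(events, start, end):
    """Keep only the events of cases that lie entirely within [start, end]."""
    crossing = {
        case for case, when, _ in events if not (start <= when <= end)
    }
    return [event for event in events if event[0] not in crossing]


# Hypothetical events as (case, date, activity).
events = [
    ("A", date(2017, 1, 5), "Order Goods"),
    ("A", date(2017, 1, 20), "Pay Invoice"),
    ("B", date(2016, 12, 28), "Order Goods"),
    ("B", date(2017, 1, 3), "Receive Goods"),
]
kept = drop_cases_crossing_interval(
    events, start=date(2017, 1, 1), end=date(2017, 1, 31)
)
```

Case B starts before the interval and is removed as a whole. Keeping only its January event would leave a case that seems to begin with a goods receipt.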

Once the data is in the process mining software, the process model can be reconstructed. The mining step itself consists of finding relationships in the event logs; the reconstruction then uses them to produce a graphical process model. Mining and reconstruction are generally handled by a single tool.
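As a small taste of what "finding relationships" can mean, the sketch below counts how often one activity is directly followed by another across all cases. This directly-follows relation is a common building block of discovery algorithms; it is our choice for this illustration and not necessarily what a particular tool does internally. The sample paths are invented.

```python
from collections import Counter


def directly_follows(paths):
    """Count how often activity a is immediately followed by activity b."""
    counts = Counter()
    for path in paths:
        for a, b in zip(path, path[1:]):
            counts[(a, b)] += 1
    return counts


# Hypothetical paths, one list of activities per case.
paths = [
    ["Order Goods", "Receive Goods", "Receive Invoice", "Pay Invoice"],
    ["Order Goods", "Receive Goods", "Receive Invoice", "Pay Invoice"],
    ["Order Goods", "Receive Invoice", "Pay Invoice"],
]
relations = directly_follows(paths)
```

Each counted pair corresponds to an edge in a graph of activities, and the counts show which transitions are the rule and which are exceptions.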

As soon as mining and reconstruction are complete, the process model can be used for its intended purpose. A key goal of process mining is to discover previously unknown processes. In that case the reconstruction is the actual goal. Further analyses are possible beyond that, ranging from pure process optimization and the analysis of organizational workflows to conformance or compliance analyses.
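A very simple order check gives an idea of what a conformance analysis looks for. The sketch compares the path of a case with the ideal sequence of the purchasing process from Figure 1 and reports neighboring activities that occur in the wrong order. It is far weaker than real conformance checking techniques, and the function name is our own.

```python
# Ideal sequence of the purchasing process as described for Figure 1.
EXPECTED = ["Order Goods", "Receive Goods", "Receive Invoice", "Pay Invoice"]


def order_violations(path, expected=EXPECTED):
    """Return neighboring activity pairs that contradict the expected order."""
    rank = {activity: i for i, activity in enumerate(expected)}
    # Activities unknown to the model are ignored in this simple check.
    known = [activity for activity in path if activity in rank]
    return [
        (earlier, later)
        for earlier, later in zip(known, known[1:])
        if rank[earlier] > rank[later]
    ]


conforming = order_violations(
    ["Order Goods", "Receive Goods", "Receive Invoice", "Pay Invoice"]
)
paid_too_early = order_violations(
    ["Order Goods", "Receive Goods", "Pay Invoice", "Receive Invoice"]
)
```

The second case is flagged because the invoice is paid before it is received, the kind of finding that is of particular interest from a compliance point of view.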

How a mining algorithm works, what problems can arise, and how the results are used is the subject of our next blog post. If you would like to learn more about the practical use of process mining from an audit perspective, feel free to browse our download area.

The complete article was first published in: Gehrke, N., Werner, M.: Process Mining, in: WISU – Wirtschaft und Studium, July 2013 issue, pp. 934–943